Nov 19, 2019

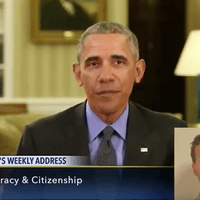

Today, we're re-releasing an old episode about how hard it's getting to tell fact from fiction, because next week we'll be putting out a story showing what happens when certain reality-altering tools get released into the wild.

Simon Adler takes us down a technological rabbit hole of strangely contorted faces and words made out of thin air. And a wonderland full of computer scientists, journalists, and digital detectives forces us to rethink even the things we see with our very own eyes.

Oh, and by the way, we decided to put the dark secrets we learned into action, and unleash this on the internet.

Reported by Simon Adler. Produced by Simon Adler and Annie McEwen.

Special thanks to everyone on the University of Southern California team who helped out with the facial manipulation: Kyle Olszewski, Koki Nagano, Ronald Yu, Yi Zhou, Jaewoo Seo, Shunsuke Saito, and Hao Li. Check out more of their work at pinscreen.com.

Special thanks also to Matthew Aylett, Supasorn Suwajanakorn, Rachel Axler, Angus Kneale, David Carroll, Amy Pearl, and Nick Bilton. Nick’s latest book is American Kingpin.

Support Radiolab by becoming a member today at Radiolab.org/donate.